
Using Cluster Systems

This section explains the usage common to the Next Generation Simulation Technology Education system and the Wide-Area Coordinated Cluster System for Education and Research. See the page for each system for details of system-specific functions.


Logging In

Submitting Jobs

Create a Torque script and submit it with the qsub command as follows.

$ qsub -q queue_name script_name

For example, enter the following to submit a job to rchq, a research job queue.

$ qsub -q rchq script_name

In the script, specify the number of nodes and the number of processors per node as follows.

#PBS -l nodes=no_of_nodes:ppn=processors_per_node

Example: To request 1 node and 16 processors per node

#PBS -l nodes=1:ppn=16

Example: To request 4 nodes and 16 processors per node

#PBS -l nodes=4:ppn=16

To run a job with a specified amount of memory, add the following line to the script.

#PBS -l nodes=no_of_nodes:ppn=processors_per_node,mem=memory_per_job

Example: To request 1 node, 16 processors per node, and 16GB memory per job

#PBS -l nodes=1:ppn=16,mem=16gb

To run a job on specified calculation nodes, add the following line to the script.

#PBS -l nodes=nodeA:ppn=processors_for_nodeA+nodeB:ppn=processors_for_nodeB,...

Example: To request csnd00 and csnd01 as the nodes and 16 processors per node

#PBS -l nodes=csnd00:ppn=16+csnd01:ppn=16

* The calculation nodes are csnd00 through csnd27 (see Hardware Configuration under System Configuration).

* csnd00 and csnd01 are equipped with Tesla K20X.
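When several nodes are named explicitly, the value after nodes= is a list of host:ppn=count entries joined with "+". The sketch below builds that string from a list of host names. The function name nodes_spec is hypothetical and is not part of Torque.

```shell
#!/bin/sh
# nodes_spec is a hypothetical helper, not a Torque command.
# Usage: nodes_spec PROCESSORS_PER_NODE HOST [HOST ...]
nodes_spec() {
    ppn=$1
    shift
    spec=""
    for host in "$@"; do
        # Join the per-host entries with "+", as in the example above.
        spec="${spec:+$spec+}$host:ppn=$ppn"
    done
    printf '%s\n' "$spec"
}

# Print the directive for csnd00 and csnd01 with 16 processors each.
printf '#PBS -l nodes=%s\n' "$(nodes_spec 16 csnd00 csnd01)"
```

The printed line can be pasted into the job script in place of a hand-written nodes= request.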

To run a job on a calculation node equipped with GPGPU, add the following line to the script.

#PBS -l nodes=1:GPU:ppn=1

To set the time limit (wall-clock time) for a job, add the following line to the script.

#PBS -l walltime=hh:mm:ss

Example: To request a duration of 336 hours to execute a job

#PBS -l walltime=336:00:00
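Because walltime is written as hh:mm:ss, a limit planned in days has to be converted to hours first. The following is a minimal sketch of that arithmetic. The helper name walltime_from_days is an assumption made for illustration.

```shell
#!/bin/sh
# walltime_from_days is a hypothetical helper: it converts whole days
# into the hh:mm:ss form used by "#PBS -l walltime=".
walltime_from_days() {
    printf '%02d:00:00\n' "$(( $1 * 24 ))"
}

# 14 days correspond to the 336-hour example above.
printf '#PBS -l walltime=%s\n' "$(walltime_from_days 14)"
```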

The major options of the qsub command are as follows.

| Option | Example | Description |
|---|---|---|
| -e | -e filename | Outputs the standard error to the specified file. When the -e option is not used, the output file is created in the directory where the qsub command was executed. The file name convention is job_name.ejob_no. |
| -o | -o filename | Outputs the standard output to the specified file. When the -o option is not used, the output file is created in the directory where the qsub command was executed. The file name convention is job_name.ojob_no. |
| -j | -j join | Specifies whether to merge the standard output and the standard error into a single file. |
| -q | -q destination | Specifies the queue to which the job is submitted. |
| -l | -l resource_list | Specifies the resources required for job execution. |
| -N | -N name | Specifies the job name (up to 15 characters). If a job is submitted via a script, the script file name is used as the job name by default. Otherwise, STDIN is used. |
| -m | -m mail_events | Sends notification of the job status by email. |
| -M | -M user_list | Specifies the email address that receives the notification. |
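The options in the table can also be written as #PBS directives at the top of a job script. The sketch below writes such a script to a temporary file and lists its directives. The job name, log file name, and mail address are placeholders. The values oe (merge standard error into standard output) and abe (mail on abort, begin, and end) are standard Torque values that this page does not itself list.

```shell
#!/bin/sh
# Write a job script whose header uses several of the options listed above.
# sample_job, sample_job.log, and user@example.com are placeholders.
script=$(mktemp)
cat > "$script" <<'EOF'
#!/bin/sh
#PBS -q rchq
#PBS -N sample_job
#PBS -l nodes=1:ppn=16
#PBS -j oe
#PBS -o sample_job.log
#PBS -m abe
#PBS -M user@example.com
cd $PBS_O_WORKDIR
./a.out
EOF

# Show the directives that qsub would read from the script.
grep '^#PBS' "$script"
```

Such a file would then be submitted with qsub followed by the script name, with no further options on the command line.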

Sample Script: When Using Intel MPI and the Intel Compiler

### sample

#!/bin/sh

#PBS -q rchq

#PBS -l nodes=1:ppn=16

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

cd $PBS_O_WORKDIR

mpirun -np $MPI_PROCS ./a.out

* The queue to submit the job to (either eduq or rchq) can be specified in the script.
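The MPI_PROCS line in the sample script counts the lines of $PBS_NODEFILE, which Torque fills with one line per allocated processor. The sketch below reproduces that count outside the batch system with a simulated node file for nodes=2:ppn=4. The host names nodeA and nodeB are placeholders.

```shell
#!/bin/sh
# Simulate the node file for nodes=2:ppn=4 (host names are placeholders).
PBS_NODEFILE=$(mktemp)
for host in nodeA nodeB; do
    for i in 1 2 3 4; do
        echo "$host"
    done
done > "$PBS_NODEFILE"

# Same idea as in the sample script: one line per allocated processor.
# tr removes the padding that some wc implementations print.
MPI_PROCS=$(wc -l < "$PBS_NODEFILE" | tr -d ' ')
echo "MPI_PROCS=$MPI_PROCS"
rm -f "$PBS_NODEFILE"
```

Deriving the process count this way keeps mpirun consistent with the nodes and ppn values in the #PBS -l line, so only that line needs editing when the request changes.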

Sample Script: When Using OpenMPI and the Intel Compiler

### sample

#!/bin/sh

#PBS -q rchq

#PBS -l nodes=1:ppn=16

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

module unload intelmpi.intel

module load openmpi.intel

cd $PBS_O_WORKDIR

mpirun -np $MPI_PROCS ./a.out

* The queue to submit the job to (either eduq or rchq) can be specified in the script.

Sample Script: When Using MPICH2 and the Intel Compiler

### sample

#!/bin/sh

#PBS -q rchq

#PBS -l nodes=1:ppn=16

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

module unload intelmpi.intel

module load mpich2.intel

cd $PBS_O_WORKDIR

mpirun -np $MPI_PROCS -iface ib0 ./a.out

* When using MPICH2, always specify the -iface option.

* The queue to submit the job to (either eduq or rchq) can be specified in the script.

Sample Script: When Using an OpenMP Program

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8

#PBS -q eduq

export OMP_NUM_THREADS=8

cd $PBS_O_WORKDIR

./a.out

Sample Script: When Using an MPI/OpenMP Hybrid Program

### sample

#!/bin/sh

#PBS -l nodes=4:ppn=8

#PBS -q eduq

export OMP_NUM_THREADS=8

cd $PBS_O_WORKDIR

sort -u $PBS_NODEFILE > hostlist

mpirun -np 4 -machinefile ./hostlist ./a.out
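In the hybrid sample, sort -u reduces the node file to one entry per node, so that one MPI process runs on each node and OpenMP threads use the processors within it. The sketch below derives the process count and the thread count from a simulated node file instead of hard-coding 4 and 8. The host names are placeholders, and the launch line is only printed because a.out is not available here.

```shell
#!/bin/sh
# Simulate the node file for nodes=4:ppn=8 (host names are placeholders).
workdir=$(mktemp -d)
PBS_NODEFILE=$workdir/nodefile
for host in nodeA nodeB nodeC nodeD; do
    for i in 1 2 3 4 5 6 7 8; do
        echo "$host"
    done
done > "$PBS_NODEFILE"

# One entry per node, as in the sample script.
sort -u "$PBS_NODEFILE" > "$workdir/hostlist"

# One MPI process per node; threads per process = processors per node.
NODES=$(wc -l < "$workdir/hostlist" | tr -d ' ')
TOTAL=$(wc -l < "$PBS_NODEFILE" | tr -d ' ')
OMP_NUM_THREADS=$((TOTAL / NODES))
export OMP_NUM_THREADS

# Print the launch line instead of running it.
echo "mpirun -np $NODES -machinefile ./hostlist ./a.out (OMP_NUM_THREADS=$OMP_NUM_THREADS)"
```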

Sample Script: When Using a GPGPU Program

### sample

#!/bin/sh

#PBS -l nodes=1:GPU:ppn=1

#PBS -q eduq

cd $PBS_O_WORKDIR

module load cuda-5.0

./a.out

ANSYS Multiphysics Job

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

ansys145 -b nolist -p AA_T_A -i vm1.dat -o vm1.out -j vm1

* vm1.dat exists in /common/ansys14.5/ansys_inc/v145/ansys/data/verif.

Parallel Jobs

The sample script is as follows.

Example: When using Shared Memory ANSYS

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=4

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

ansys145 -b nolist -p AA_T_A -i vm141.dat -o vm141.out -j vm141 -np 4

Example: When using Distributed ANSYS

### sample

#!/bin/sh

#PBS -l nodes=2:ppn=2

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

ansys145 -b nolist -p AA_T_A -i vm141.dat -o vm141.out -j vm141 -np 4 -dis

* vm141.dat exists in /common/ansys14.5/ansys_inc/v145/ansys/data/verif.

ANSYS CFX Job

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

cfx5solve -def StaticMixer.def

* StaticMixer.def exists in /common/ansys14.5/ansys_inc/v145/CFX/examples.

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=4

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

cfx5solve -def StaticMixer.def -part 4 -start-method 'Intel MPI Local Parallel'

* StaticMixer.def exists in /common/ansys14.5/ansys_inc/v145/CFX/examples.

ANSYS Fluent Job

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

fluent 3ddp -g -i input-3d > stdout.txt 2>&1

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=4

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

fluent 3ddp -g -i input-3d -t4 -mpi=intel > stdout.txt 2>&1

ANSYS LS-DYNA Job

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load ansys14.5

cd $PBS_O_WORKDIR

lsdyna145 i=hourglass.k memory=100m

* For hourglass.k, see LS-DYNA Examples (http://www.dynaexamples.com/).

ABAQUS Job

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load abaqus-6.12-3

cd $PBS_O_WORKDIR

abaqus job=1_mass_coarse

* 1_mass_coarse.inp exists in /common/abaqus-6.12-3/6.12-3/samples/job_archive/samples.zip.

PHASE Job

Parallel Jobs (phase command: SCF calculation)

The sample script is as follows.

### sample

#!/bin/sh

#PBS -q eduq

#PBS -l nodes=2:ppn=2

cd $PBS_O_WORKDIR

module load phase-11.00-parallel

mpirun -np 4 phase

* For PHASE jobs, the set of I/O files is defined in a file named file_names.data.

* Sample input files are available at /common/phase-11.00-parallel/sample/Si2/scf/.

Single Job (ekcal command: Band calculation)

The sample script is as follows.

### sample

#!/bin/sh

#PBS -q eduq

#PBS -l nodes=1:ppn=1

cd $PBS_O_WORKDIR

module load phase-11.00-parallel

ekcal

* For ekcal jobs, the set of I/O files is defined in a file named file_names.data.

* Sample input files are available at /common/phase-11.00-parallel/sample/Si2/band/.

* (Prior to using the sample files under Si2/band/, execute the sample file in ../scf/ to create nfchgt.data.)

UVSOR Job

Parallel Jobs (epsmain command: Dielectric constant calculation)

The sample script is as follows.

### sample

#!/bin/sh

#PBS -q eduq

#PBS -l nodes=2:ppn=2

cd $PBS_O_WORKDIR

module load uvsor-v342-parallel

mpirun -np 4 epsmain

* For epsmain jobs, the set of I/O files is defined in a file named file_names.data.

* Sample input files are available at /common/uvsor-v342-parallel/sample/electron/Si/eps/.

* (Prior to using the sample files under Si/eps/, execute the sample file in ../scf/ to create nfchgt.data.)

OpenMX Job

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/bash

#PBS -l nodes=2:ppn=2

cd $PBS_O_WORKDIR

module load openmx-3.6-parallel

export LD_LIBRARY_PATH=/common/intel-2013/composer_xe_2013.1.117/mkl/lib/intel64:$LD_LIBRARY_PATH

mpirun -np 4 openmx H2O.dat

* H2O.dat exists in /common/openmx-3.6-parallel/work/.

* The following line must be added to H2O.dat.

DATA.PATH /common/openmx-3.6-parallel/DFT_DATA11

GAUSSIAN Job

Sample input file (methane.com)

%NoSave

%Mem=512MB

%NProcShared=4

%chk=methane.chk

#MP2/6-31G opt

methane

0 1

C -0.01350511 0.30137653 0.27071342

H 0.34314932 -0.70743347 0.27071342

H 0.34316773 0.80577472 1.14436492

H 0.34316773 0.80577472 -0.60293809

H -1.08350511 0.30138971 0.27071342

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load gaussian09-C.01

cd $PBS_O_WORKDIR

g09 methane.com

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=4,mem=3gb,pvmem=3gb

#PBS -q eduq

cd $PBS_O_WORKDIR

module load gaussian09-C.01

g09 methane.com
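The sample input sets %NProcShared=4 and the parallel script requests ppn=4. Assuming the two values are meant to agree, a check like the following could be run before submitting. The temporary file stands in for methane.com and holds only its Link 0 lines, and the variable PPN is a placeholder for the ppn value in the job script.

```shell
#!/bin/sh
# Stand-in for methane.com, containing only the Link 0 lines of the sample.
input=$(mktemp)
cat > "$input" <<'EOF'
%NoSave
%Mem=512MB
%NProcShared=4
%chk=methane.chk
EOF

# Extract the value after "%NProcShared=" (case-insensitive match).
nproc=$(grep -i '^%nprocshared=' "$input" | cut -d= -f2)

# PPN is a placeholder for the ppn value requested in the job script.
PPN=4
if [ "$nproc" -eq "$PPN" ]; then
    echo "OK: %NProcShared=$nproc matches ppn=$PPN"
else
    echo "Mismatch: %NProcShared=$nproc but ppn=$PPN" >&2
fi
```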

NWChem Job

Sample input file (h2o.nw)

echo

start h2o

title h2o

geometry units au

O 0 0 0

H 0 1.430 -1.107

H 0 -1.430 -1.107

end

basis

* library 6-31g**

end

scf

direct; print schwarz; profile

end

task scf

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/sh

#PBS -q eduq

#PBS -l nodes=2:ppn=2

export NWCHEM_TOP=/common/nwchem-6.1.1-parallel

cd $PBS_O_WORKDIR

module load nwchem.parallel

mpirun -np 4 nwchem h2o.nw

GAMESS Job

Single Job

The sample script is as follows.

### sample

#!/bin/bash

#PBS -q eduq

#PBS -l nodes=1:ppn=1

module load gamess-2012.r2-serial

mkdir -p /work/$USER/scratch/$PBS_JOBID

mkdir -p $HOME/scr

rm -f $HOME/scr/exam01.dat

rungms exam01.inp 00 1

* exam01.inp exists in /common/gamess-2012.r2-serial/tests/standard/.

MPQC Job

Sample input file (h2o.in)

% HF/STO-3G SCF water

method: HF

basis: STO-3G

molecule:

O 0.172 0.000 0.000

H 0.745 0.000 0.754

H 0.745 0.000 -0.754

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/sh

#PBS -q rchq

#PBS -l nodes=2:ppn=2

MPQCDIR=/common/mpqc-2.4-4.10.2013.18.19

cd $PBS_O_WORKDIR

module load mpqc-2.4-4.10.2013.18.19

mpirun -np 4 mpqc -o h2o.out h2o.in

AMBER Job

Sample input file (gbin-sander)

short md, nve ensemble

&cntrl

ntx=5, irest=1,

ntc=2, ntf=2, tol=0.0000001,

nstlim=100, ntt=0,

ntpr=1, ntwr=10000,

dt=0.001,

/

&ewald

nfft1=50, nfft2=50, nfft3=50, column_fft=1,

/

EOF

Sample input file (gbin-pmemd)

short md, nve ensemble

&cntrl

ntx=5, irest=1,

ntc=2, ntf=2, tol=0.0000001,

nstlim=100, ntt=0,

ntpr=1, ntwr=10000,

dt=0.001,

/

&ewald

nfft1=50, nfft2=50, nfft3=50,

/

EOF

Single Job

The sample script is as follows.

Example: When using sander

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load amber12-serial

cd $PBS_O_WORKDIR

sander -i gbin-sander -p prmtop -c eq1.x

* prmtop and eq1.x exist in /common/amber12-parallel/test/4096wat.

Example: When using pmemd

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load amber12-serial

cd $PBS_O_WORKDIR

pmemd -i gbin-pmemd -p prmtop -c eq1.x

* prmtop and eq1.x exist in /common/amber12-parallel/test/4096wat.

Parallel Jobs

The sample script is as follows.

Example: When using sander

### sample

#!/bin/sh

#PBS -l nodes=2:ppn=4

#PBS -q eduq

module unload intelmpi.intel

module load openmpi.intel

module load amber12-parallel

cd $PBS_O_WORKDIR

mpirun -np 8 sander.MPI -i gbin-sander -p prmtop -c eq1.x

* prmtop and eq1.x exist in /common/amber12-parallel/test/4096wat.

Example: When using pmemd

### sample

#!/bin/sh

#PBS -l nodes=2:ppn=4

#PBS -q eduq

module unload intelmpi.intel

module load openmpi.intel

module load amber12-parallel

cd $PBS_O_WORKDIR

mpirun -np 8 pmemd.MPI -i gbin-pmemd -p prmtop -c eq1.x

* prmtop and eq1.x exist in /common/amber12-parallel/test/4096wat.

CONFLEX Job

Sample input file (methane.mol)

Sample

Chem3D Core 13.0.009171314173D

5 4 0 0 0 0 0 0 0 0999 V2000

0.1655 0.7586 0.0176 H 0 0 0 0 0 0 0 0 0 0 0 0

0.2324 -0.3315 0.0062 C 0 0 0 0 0 0 0 0 0 0 0 0

-0.7729 -0.7586 0.0063 H 0 0 0 0 0 0 0 0 0 0 0 0

0.7729 -0.6729 0.8919 H 0 0 0 0 0 0 0 0 0 0 0 0

0.7648 -0.6523 -0.8919 H 0 0 0 0 0 0 0 0 0 0 0 0

2 1 1 0

2 3 1 0

2 4 1 0

2 5 1 0

M END

Single Job

The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=1

#PBS -q eduq

cd $PBS_O_WORKDIR

flex7a1.ifc12.Linux.exe -par /common/conflex7/par methane

Parallel Jobs

Create an execution script and submit the job with the qsub command. The sample script is as follows.

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8

#PBS -q eduq

module load conflex7

cd $PBS_O_WORKDIR

mpirun -np 8 flex7a1.ifc12.iMPI.Linux.exe -par /common/conflex7/par methane

MATLAB Job

Sample input file (matlabdemo.m)

% Sample

n=6000;X=rand(n,n);Y=rand(n,n);tic; Z=X*Y;toc

% after finishing work

switch getenv('PBS_ENVIRONMENT')

case {'PBS_INTERACTIVE',''}

disp('Job finished. Results not yet saved.');

case 'PBS_BATCH'

disp('Job finished. Saving results.')

matfile=sprintf('matlab-%s.mat',getenv('PBS_JOBID'));

save(matfile);

exit

otherwise

disp([ 'Unknown PBS environment ' getenv('PBS_ENVIRONMENT')]);

end

Single Job

The sample script is as follows.

### sample

#!/bin/bash

#PBS -l nodes=1:ppn=1

#PBS -q eduq

module load matlab-R2012a

cd $PBS_O_WORKDIR

matlab -nodisplay -nodesktop -nosplash -nojvm -r "maxNumCompThreads(1); matlabdemo"

Parallel Jobs

The sample script is as follows.

### sample

#!/bin/bash

#PBS -l nodes=1:ppn=4

#PBS -q eduq

module load matlab-R2012a

cd $PBS_O_WORKDIR

matlab -nodisplay -nodesktop -nosplash -nojvm -r "maxNumCompThreads(4); matlabdemo"

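CASTEP/DMol3 Job

There are two ways to run Materials Studio CASTEP/DMol3 on the university's supercomputer:

① Submit the job to the supercomputer from Materials Studio Visualizer (supported only in Materials Studio 2016).

② Transfer the input files to the supercomputer with WinSCP or a similar tool and submit the job with the qsub command.

This section describes method ②, which lets you change the parameters passed to the job scheduler. CASTEP is used as the example below.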

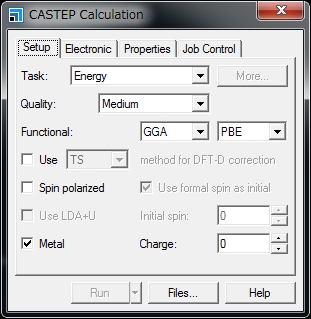

1. Create the input files in Materials Studio Visualizer.

Click "Files..." in the "CASTEP Calculation" window.

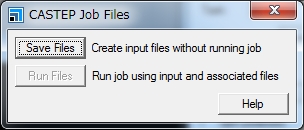

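Next, click "Save Files" in the "CASTEP Job Files" window.

The input files are saved in

(any path)\(project name)_Files\Documents\(folder created by the above operation)\

For example, when a CASTEP energy calculation is run on acetanilide.xsd, "(folder created by the above operation)" is "acetanilide CASTEP Energy".

"(any path)" is normally "C:\Users\(account name)\Documents\Materials Studio Projects".

The name of the created folder contains spaces. Remove the spaces or replace them with a character such as "_". The folder name must not contain Japanese characters.

2. Use file transfer software such as WinSCP to transfer the created input files, together with their folder, to the university's supercomputer.

3. In the transferred folder, create an execution script like the one below and submit the job with the qsub command.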

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8,mem=24gb,pmem=3gb,vmem=24gb,pvmem=3gb

#PBS -l walltime=24:00:00

#PBS -q rchq

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

cd $PBS_O_WORKDIR

cp /common/accelrys/cdev0/MaterialsStudio8.0/etc/CASTEP/bin/RunCASTEP.sh .

perl -i -pe 's/\r//' *

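./RunCASTEP.sh -np $MPI_PROCS acetanilide

* For the third argument of RunCASTEP.sh, specify the name of the file in the transferred folder. The extension is not needed.

* Specify a value of 8 or less for "ppn=".

* The elapsed-time limit and the memory limits can be changed as needed.

* The "Gateway location", "Queue", and "Run in parallel on" settings on the "Job Control" tab of the "CASTEP Calculation" window have no effect on command-line execution. The settings in the execution script used with the qsub command apply instead.

* The execution script depends on the computer and the calculation module used. See "Sample Execution Scripts" below.

4. After the job finishes, transfer the result files to the client PC and view them with Materials Studio Visualizer.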
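Step 1 notes that the folder created by Materials Studio has spaces in its name. If the folder has already been transferred, the renaming could be done on the cluster side, as in the sketch below. It runs in a scratch directory with a stand-in folder and a placeholder file named sample.txt, and it also shows the carriage-return removal that the perl one-liner in the sample script performs.

```shell
#!/bin/sh
# Work in a scratch directory so the sketch does not touch real data.
cd "$(mktemp -d)" || exit 1
mkdir "acetanilide CASTEP Energy"
# sample.txt is a placeholder with a Windows-style line ending.
printf 'placeholder line\r\n' > "acetanilide CASTEP Energy/sample.txt"

# Replace every space in each matching folder name with an underscore.
for dir in *\ */; do
    dir=${dir%/}
    new=$(printf '%s' "$dir" | tr ' ' '_')
    mv "$dir" "$new"
done

# Strip carriage returns, like the perl one-liner in the sample script.
tr -d '\r' < acetanilide_CASTEP_Energy/sample.txt > sample.tmp
mv sample.tmp acetanilide_CASTEP_Energy/sample.txt
ls
```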

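Notes on Shared Use

The Materials Studio licenses held are CASTEP: 8 and DMol3: 8. One license can run one job with a parallelism of 8 or less. These licenses are shared. Please take care not to conflict with other users.

Sample Execution Scripts

To run Materials Studio 8.0 CASTEP on the Wide-Area Coordinated Cluster System for Education and Research: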

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8,mem=50000mb,pmem=6250mb,vmem=50000mb,pvmem=6250mb

#PBS -l walltime=24:00:00

#PBS -q wLrchq

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

cd $PBS_O_WORKDIR

cp /common/accelrys/wdev0/MaterialsStudio8.0/etc/CASTEP/bin/RunCASTEP.sh .

perl -i -pe 's/\r//' *

./RunCASTEP.sh -np $MPI_PROCS acetanilide

To run Materials Studio 8.0 DMol3 on the Wide-Area Coordinated Cluster System for Education and Research:

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8,mem=50000mb,pmem=6250mb,vmem=50000mb,pvmem=6250mb

#PBS -l walltime=24:00:00

#PBS -q wLrchq

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

cd $PBS_O_WORKDIR

cp /common/accelrys/wdev0/MaterialsStudio8.0/etc/DMol3/bin/RunDMol3.sh .

perl -i -pe 's/\r//' *

./RunDMol3.sh -np $MPI_PROCS acetanilide

To run Materials Studio 2016 CASTEP on the Wide-Area Coordinated Cluster System for Education and Research:

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8,mem=50000mb,pmem=6250mb,vmem=50000mb,pvmem=6250mb

#PBS -l walltime=24:00:00

#PBS -q wLrchq

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

cd $PBS_O_WORKDIR

cp /common/accelrys/wdev0/MaterialsStudio2016/etc/CASTEP/bin/RunCASTEP.sh .

perl -i -pe 's/\r//' *

./RunCASTEP.sh -np $MPI_PROCS acetanilide

To run Materials Studio 2016 DMol3 on the Wide-Area Coordinated Cluster System for Education and Research:

### sample

#!/bin/sh

#PBS -l nodes=1:ppn=8,mem=50000mb,pmem=6250mb,vmem=50000mb,pvmem=6250mb

#PBS -l walltime=24:00:00

#PBS -q wLrchq

MPI_PROCS=`wc -l $PBS_NODEFILE | awk '{print $1}'`

cd $PBS_O_WORKDIR

cp /common/accelrys/wdev0/MaterialsStudio2016/etc/DMol3/bin/RunDMol3.sh .

perl -i -pe 's/\r//' *

./RunDMol3.sh -np $MPI_PROCS acetanilide

Checking Job Status

Use the qstat command to check the status of jobs and queues.

(1) Displaying job status

qstat -a

(2) Displaying queue status

qstat -Q

The major options of the qstat command are as follows.

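| Option | Example | Description |
|---|---|---|
| -a | -a | Displays all queued and running jobs. |
| -Q | -Q | Displays a summary of the status of all queues. |
| -Qf | -Qf | Displays the detailed status of all queues. |
| -q | -q | Displays the queue limits. |
| -n | -n | Displays the host names of the calculation nodes assigned to jobs. |
| -r | -r | Displays a list of running jobs. |
| -i | -i | Displays a list of jobs that are not running. |
| -u | -u user_name | Displays a list of the jobs of the specified user. |
| -f | -f job_ID | Displays detailed information about the specified job ID. |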
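The detailed output of qstat -f is a list of "name = value" lines, one of which is job_state (for example R for running or Q for queued). The sketch below extracts that field with awk. The helper name job_state is hypothetical, and the sample text is a shortened stand-in for real qstat output so that the example runs without a batch system.

```shell
#!/bin/sh
# job_state is a hypothetical helper: it reads "qstat -f" style output
# on standard input and prints the value of the job_state field.
job_state() {
    awk -F' = ' '$1 ~ /^[ \t]*job_state$/ { print $2 }'
}

# Shortened stand-in for the output of "qstat -f 12345".
sample_output='Job Id: 12345.server
    Job_Name = sample_job
    job_state = R
    queue = rchq'

printf '%s\n' "$sample_output" | job_state
```

On the cluster, the same function would be fed from the real command, for example by piping the output of qstat -f with a job ID into job_state.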

Canceling a Submitted Job

Use the qdel command to cancel a job.

qdel jobID

Check the job ID with the qstat command.